Articles / Natural Language Processing / Part 6

Agents, Reasoning, and Compound AI

Tool use, multi-step reasoning, and the shift from single models to AI systems.

Tags: NLP, Agents, Reasoning, Compound AI, Python

A single model call has hard limits: finite context windows, no ability to take actions, and no way to verify its own outputs. The shift from "better models" to "better systems" reflects a recognition that the most capable AI applications are not monolithic; they are compositions of models, tools, and retrieval systems orchestrated into multi-step pipelines. The transformer did not solve natural language processing. It provided the core component around which a new class of systems could be built.

Giving a language model access to external tools (search engines, calculators, databases, APIs) transforms it from a closed system into an open one. Function calling lets the model emit structured requests that an orchestration layer executes, returning results back into the model's context. The model decides when and how to use tools; the tools provide capabilities the model lacks, such as precise arithmetic, real-time data access, and interaction with external systems.

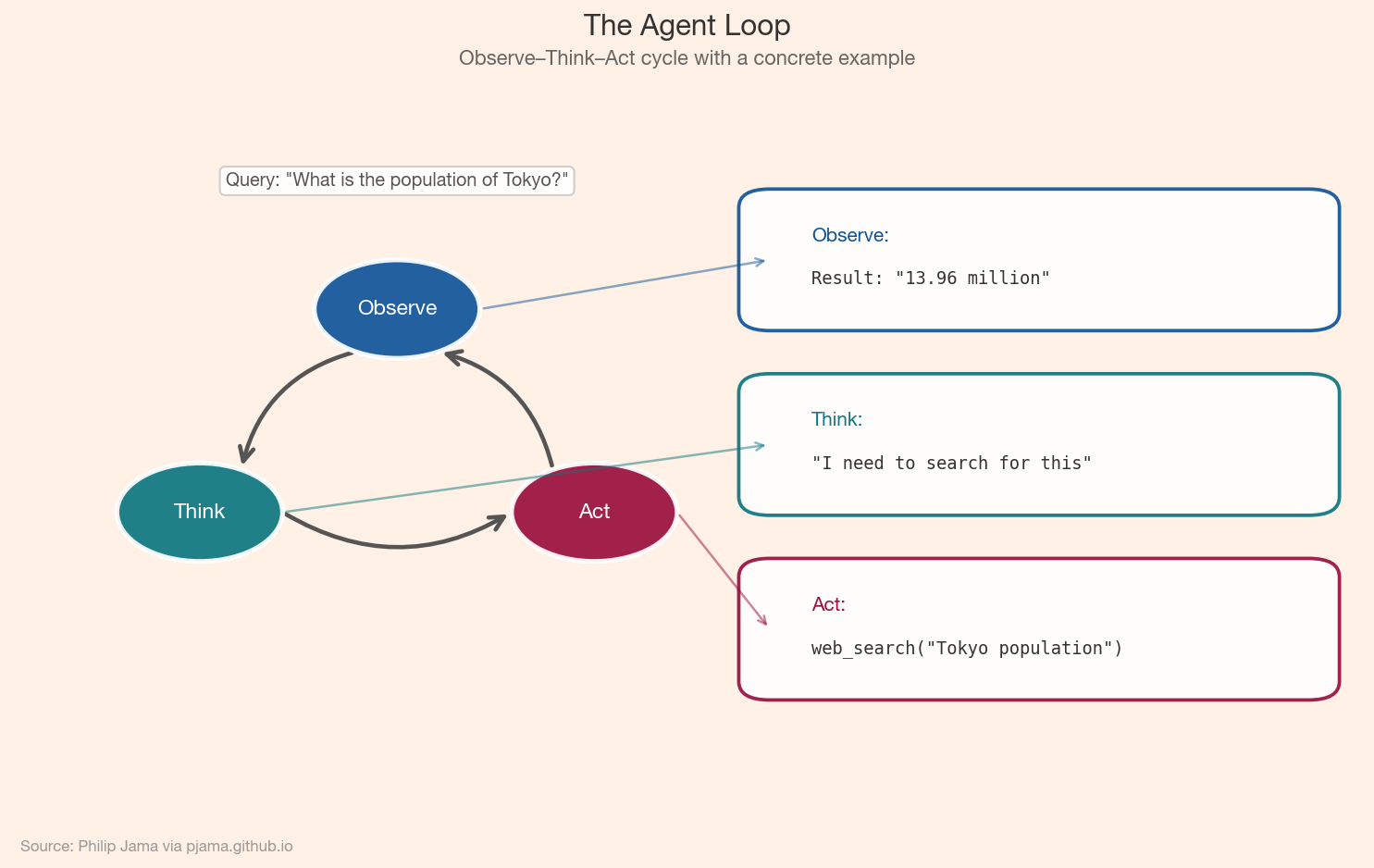
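To make the function-calling flow concrete, here is a minimal sketch of the orchestration side. It assumes the model emits a JSON object with `name` and `arguments` fields; that request shape, the `TOOLS` registry, and both tool functions are hypothetical and chosen for illustration, not taken from any particular provider's API.

```python
import json
from typing import Callable

# Hypothetical tools; the names and behavior are illustrative assumptions.
def add(a: float, b: float) -> float:
    return a + b

def lookup_capital(country: str) -> str:
    capitals = {"France": "Paris", "Japan": "Tokyo"}
    return capitals.get(country, "unknown")

# Registry mapping tool names the model may emit to Python callables.
TOOLS: dict[str, Callable] = {"add": add, "lookup_capital": lookup_capital}

def execute_tool_call(raw_request: str) -> str:
    """Parse a model-emitted JSON request, run the tool, return a JSON result."""
    try:
        request = json.loads(raw_request)
        tool = TOOLS[request["name"]]
        result = tool(**request["arguments"])
        return json.dumps({"ok": True, "result": result})
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        # Errors go back to the model as data so it can retry or change course.
        return json.dumps({"ok": False, "error": f"{type(exc).__name__}: {exc}"})

# Simulated model output (assumed format), executed by the orchestration layer.
model_output = '{"name": "add", "arguments": {"a": 2.5, "b": 4}}'
tool_message = execute_tool_call(model_output)
```

The string in `tool_message` is what would be appended to the model's context for its next turn. Returning failures as structured results, rather than raising, keeps the decision about what to do next with the model.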

An agent operates in a loop: observe the current state, reason about what to do next, take an action, and observe the result. This cycle repeats until the task is complete or a stopping condition is met. The simplest agents are ReAct-style loops (reason, act, observe), but more sophisticated architectures include planning, reflection, and self-correction stages. The key insight is that multi-step execution lets agents tackle tasks that no single model call could handle.

The diagram below shows the observe–think–act cycle at the core of every agent, annotated with a concrete example of answering a factual question.

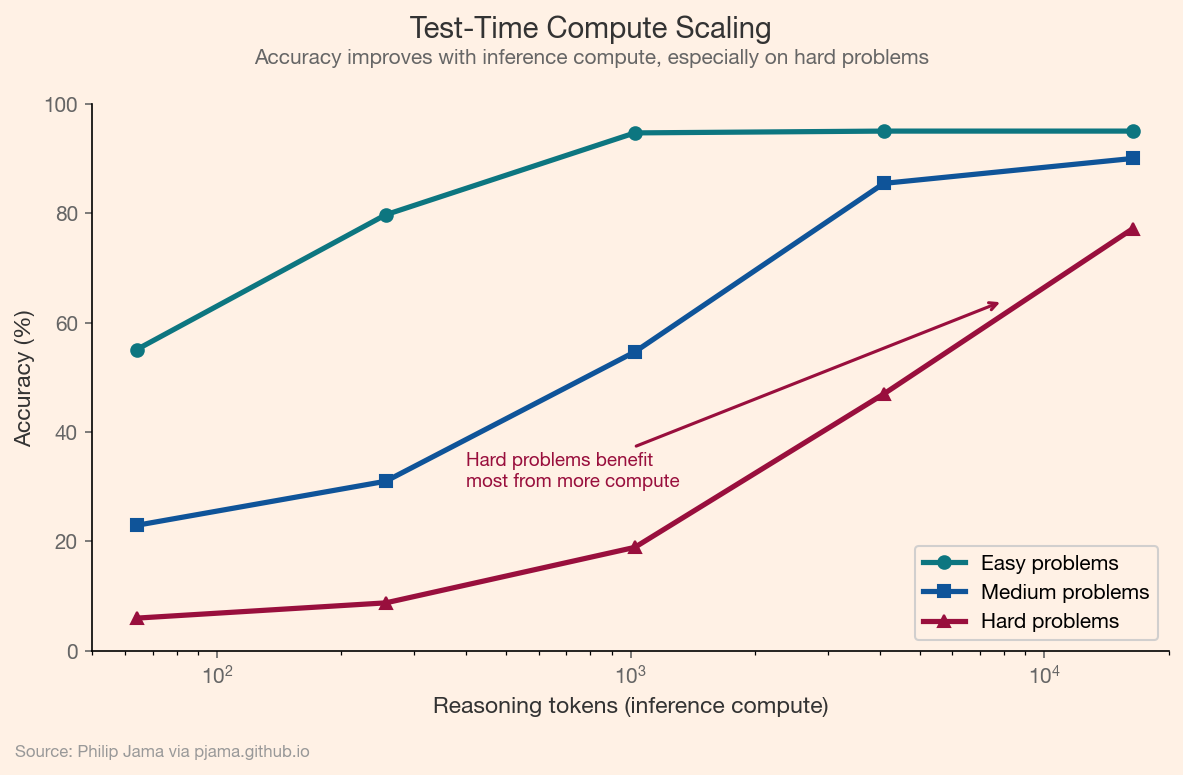
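The loop can be sketched in a few lines of Python. This is a toy under stated assumptions: `scripted_policy` stands in for a real language model call, `search` is a dictionary lookup rather than a real search engine, and every name here is hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Step:
    thought: str   # the reasoning the policy produced
    action: str    # "search" or "finish"
    argument: str  # the search query, or the final answer

def scripted_policy(question: str, observations: list[str]) -> Step:
    """Stand-in for a language model call (hypothetical, hard-coded logic)."""
    if not observations:
        return Step("I need to look this up.", "search", question)
    return Step("The observation answers the question.", "finish", observations[-1])

def search(query: str) -> str:
    """Toy search tool backed by a fixed dictionary."""
    corpus = {"capital of France": "Paris"}
    for key, value in corpus.items():
        if key in query:
            return value
    return "no result"

def run_agent(question: str, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):                            # stopping condition
        step = scripted_policy(question, observations)    # reason
        if step.action == "finish":
            return step.argument
        observations.append(search(step.argument))        # act, then observe
    return "stopped: step limit reached"

answer = run_agent("What is the capital of France?")
```

The `max_steps` bound is the stopping condition mentioned above; without one, a policy that never emits `finish` would loop indefinitely.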

Chain-of-thought prompting (Part 3) demonstrated that generating intermediate reasoning steps improves accuracy. Test-time compute scaling generalizes this: spending more computation at inference, through longer reasoning traces, tree-of-thought search, or self-verification, trades inference cost for accuracy on hard problems. The scaling relationship between inference compute and task performance mirrors the training-time scaling laws, suggesting a complementary axis for improving capabilities.
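One simple way to spend more inference compute is to sample several independent answers and take a majority vote. The simulation below is a sketch of that idea only: `noisy_solver` is a hypothetical stand-in for one sampled reasoning trace, and its 60% per-sample accuracy is an arbitrary assumption rather than a measured figure.

```python
import random
from collections import Counter

def noisy_solver(rng: random.Random, correct: int = 42, p_correct: float = 0.6) -> int:
    """Stand-in for one sampled reasoning trace (assumed 60% accurate)."""
    if rng.random() < p_correct:
        return correct
    return rng.choice([41, 43, 44])  # wrong answers are spread across options

def majority_vote(num_samples: int, rng: random.Random) -> int:
    """Sample several answers and return the most common one."""
    answers = [noisy_solver(rng) for _ in range(num_samples)]
    return Counter(answers).most_common(1)[0][0]

def estimate_accuracy(num_samples: int, trials: int = 2000, seed: int = 0) -> float:
    """Estimate how often the voted answer is correct for a given sample budget."""
    rng = random.Random(seed)
    hits = sum(majority_vote(num_samples, rng) == 42 for _ in range(trials))
    return hits / trials

for n in (1, 5, 25):
    print(n, estimate_accuracy(n))
```

In this toy setting accuracy rises with the number of samples because wrong answers rarely agree with each other. Real models make correlated errors, so the gains in practice are typically smaller.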

The chart below shows how accuracy scales with inference compute across problem difficulties — easy problems plateau quickly, while hard problems continue to benefit from longer reasoning traces.

Production AI applications increasingly combine multiple components: a retriever for knowledge grounding (Part 4), an aligned language model for generation (Part 5), tool integrations for action, and orchestration logic for control flow. These compound AI systems are more than the sum of their parts: the retriever compensates for the model's knowledge gaps, the model compensates for the retriever's lack of synthesis, and the orchestration layer manages the interaction between components.
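A stripped-down sketch of such a composition follows. It assumes a word-overlap retriever and a `generate` function that merely formats its inputs; both are hypothetical placeholders for a real retriever and a real model call, kept trivial so the orchestration logic is visible.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase and split into word tokens, dropping punctuation."""
    return set(re.findall(r"\w+", text.lower()))

def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Toy retriever: rank documents by word overlap, drop those with none."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    relevant = sorted((p for p in scored if p[0] > 0), key=lambda p: p[0], reverse=True)
    return [doc for _, doc in relevant[:k]]

def generate(query: str, passages: list[str]) -> str:
    """Placeholder for a language model call; it only formats its inputs."""
    return f"Q: {query} | grounded in: " + " / ".join(passages)

def answer(query: str, documents: list[str]) -> str:
    """Orchestration: retrieve first, and decline when nothing relevant is found."""
    passages = retrieve(query, documents)
    if not passages:
        return "I don't know."
    return generate(query, passages)

docs = ["Transformers use attention.", "Bananas are yellow."]
grounded = answer("How does attention work?", docs)
declined = answer("zebra", docs)
```

The control flow in `answer` is the orchestration layer in miniature: it decides whether generation should run at all, which neither the retriever nor the model can decide on its own.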

Multi-hop fidelity, meaning how well information survives as it passes through multiple processing stages, becomes a central concern in compound systems. Each retrieval, summarization, and generation step can lose part of the signal, and those losses compound across stages. For a detailed analysis of this phenomenon, see Fidelity in LLM Information Processing.
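A back-of-the-envelope way to see why stage count matters is to assume each stage independently preserves some fraction of the relevant information, so the fractions multiply. This multiplicative model and the 0.9 figures below are illustrative assumptions, not results from the analysis referenced above.

```python
def retained_fraction(per_stage_fidelity: list[float]) -> float:
    """Fraction of information left after all stages, assuming independent losses."""
    retained = 1.0
    for fidelity in per_stage_fidelity:
        if not 0.0 <= fidelity <= 1.0:
            raise ValueError("each fidelity must be between 0 and 1")
        retained *= fidelity
    return retained

# Hypothetical pipeline: retrieval, summarization, generation, each at 0.9.
three_stage = retained_fraction([0.9, 0.9, 0.9])
print(round(three_stage, 3))  # 0.729
```

Under these assumptions, three stages that each look acceptable in isolation leave roughly 73% of the signal, which is why end-to-end evaluation matters more than per-component evaluation.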

Coding assistants are among the most mature agent applications. They combine code generation with tool use (executing code, reading files, searching documentation) and iterative reasoning (debugging failures, refining solutions). The agent writes code, runs it, observes the output, and adjusts: the same loop a human developer follows, compressed into seconds. Autonomous research agents extend this pattern to broader domains: searching the web, reading papers, synthesizing findings, and producing structured outputs.
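The write, run, observe, adjust loop can be sketched as follows. The `drafts` list is a hypothetical stand-in for successive model revisions, and the single hard-coded check on `square` stands in for a test suite. A real assistant would run candidate code in a sandbox; calling `exec` on untrusted code as done here is acceptable only in a toy.

```python
from typing import Optional

def run_candidate(source: str) -> Optional[str]:
    """Execute candidate code and its check; return an error message, or None on success."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # toy only: never exec untrusted code unsandboxed
        assert namespace["square"](3) == 9, "square(3) should be 9"
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"
    return None

def repair_loop(drafts: list[str]) -> tuple[Optional[int], list[str]]:
    """Try drafts in order; return the passing attempt number and collected feedback."""
    feedback: list[str] = []
    for attempt, source in enumerate(drafts, start=1):
        error = run_candidate(source)        # run and observe
        if error is None:
            return attempt, feedback
        feedback.append(error)               # would be fed back to the model
    return None, feedback

# Hypothetical drafts: the first has a bug, the second fixes it.
drafts = [
    "def square(x):\n    return x + x\n",
    "def square(x):\n    return x * x\n",
]
passing_attempt, errors = repair_loop(drafts)
```

In a real system the error text collected in `feedback` would be placed in the model's context so the next draft can respond to it; here the drafts are fixed in advance to keep the example deterministic.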

The "unsolved problem" of Part 1 — language is ambiguous, context-dependent, and vast — remains unsolved. But the nature of the problem has fundamentally changed. The question is no longer whether machines can process language competently. It is how to compose, ground, and align the systems that do.
