Articles /Decision Science /Part 2

How the Bayesian Approach Improves Sample Efficiency

Prior knowledge, adaptive designs, and why you need fewer participants

BayesianRCTA/B TestingExperimentation

Articles /Decision Science /Part 2

BayesianRCTA/B TestingExperimentation

In online randomized controlled trials (RCTs), the Bayesian approach offers significant advantages in sample efficiency, meaning you can often reach reliable conclusions with fewer participants or observations compared to traditional frequentist methods. This efficiency stems from two core features of Bayesian statistics: the ability to incorporate prior knowledge and the flexibility of adaptive designs. This article expands on the foundations introduced in Online Experiments with a Bayesian Lens.

The primary ways Bayesian methods lead to greater sample efficiency are:

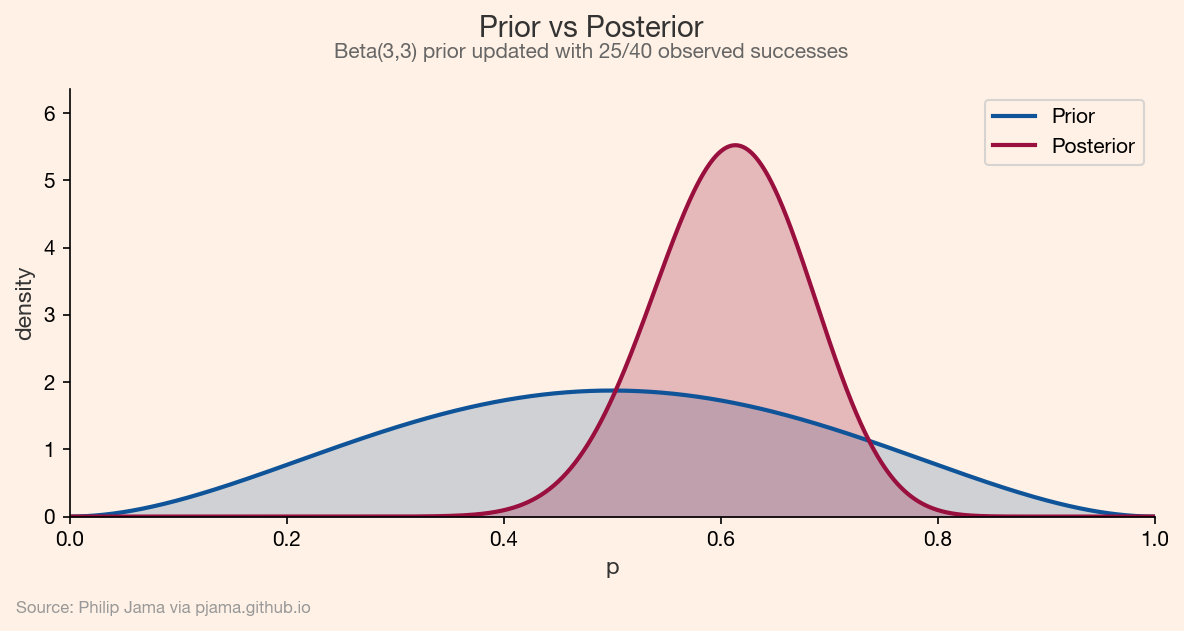

Before an experiment begins, you often have existing information from previous studies, expert opinion, or related data. The Bayesian framework allows you to formally incorporate this "prior" information into your statistical model. This means the experiment doesn't start from a state of complete ignorance. By providing a head start, this prior information can reduce the amount of new data needed to reach a confident conclusion.

Bayesian methods are naturally suited for adaptive trial designs. This means you can monitor the results as the data comes in and modify the experiment on the fly. A key advantage here is the ability to stop the experiment early if the evidence strongly favors one variation over another. This is in contrast to frequentist approaches, where peeking at the data and stopping early can invalidate the results. The ability to conclude an experiment as soon as a meaningful result is apparent can save significant time and resources. For example, if a new feature is clearly underperforming, you can stop the experiment and avoid exposing more users to a negative experience.

In more advanced adaptive designs, you can dynamically allocate more participants to the better-performing variation. This "multi-armed bandit" approach can maximize the positive impact of the experiment while it's still running.

A prior is a probability distribution that represents your beliefs about an unknown parameter before you've seen the data from your experiment. It's a way to quantify your initial understanding. Priors can be:

The process involves combining this prior with the likelihood (the evidence from your current experiment's data) to produce a posterior distribution. The posterior represents your updated beliefs about the parameter, incorporating both your prior knowledge and the new evidence.

Beyond sample efficiency, the Bayesian approach offers several other advantages for running online experiments:

Bayesian analysis provides results that are often easier for non-statisticians to understand. Instead of p-values and confidence intervals, which can be counterintuitive, a Bayesian experiment can give you a direct probability statement, such as "There is a 95% probability that variation B is better than variation A." This clarity helps stakeholders make more confident and informed decisions.

The Bayesian framework is highly flexible and can be applied to a wide range of experimental designs. It can handle complex scenarios with multiple variations, and it excels at adaptive designs where the experiment is modified in real-time based on incoming data. This adaptability allows for more efficient and ethical testing.

A common challenge with frequentist methods is that a "non-significant" result doesn't necessarily mean there's no difference between variations; it just means you failed to find one. Bayesian methods, on the other hand, can provide evidence in favor of the null hypothesis, which can be valuable for understanding when a change has no meaningful effect.

The ability to incorporate prior knowledge makes Bayesian methods particularly powerful when dealing with limited data, such as in experiments on low-traffic pages or with rare events.

In essence, Bayesian methods provide a more dynamic and intuitive framework for online experiments, often leading to faster and more efficient learning cycles.

Bayesian updating tells you what to believe, but not what to do next. Multi-armed bandits use those beliefs to balance exploration and exploitation in real time.

If you're exploring related work and need hands-on help, I'm open to consulting and advisory. Get in touch›