Articles /Network Graph Analysis /Part 7

Graph Neural Networks: From Spectral to Spatial

Deep learning on graph-structured data with GCN, GAT, and message passing

Graph Neural NetworksDeep LearningPythonPyTorch

Articles /Network Graph Analysis /Part 7

Graph Neural NetworksDeep LearningPythonPyTorch

Traditional neural networks assume grid-structured input: images are pixel grids, text is a token sequence. But many domains produce data that lives on irregular graphs: molecules, social networks, 3D meshes, knowledge bases. Graph Neural Networks (GNNs) extend deep learning to these structures, learning node and graph representations that capture both features and topology. This article covers the core architectures, from spectral graph convolutions to spatial message passing.

Hand-crafted graph features (degree, centrality, clustering) capture some structure but miss complex, task-specific patterns. GNNs learn features automatically from the graph, jointly encoding node attributes and neighborhood structure into dense embeddings useful for node classification, link prediction, and graph-level tasks.

The spectral approach defines convolution via the graph Fourier transform (eigenvectors of the Laplacian, introduced in Part 3 (Centrality, Influence, and Spectral Methods)). Kipf & Welling’s Graph Convolutional Network (GCN) simplifies this to a single-hop neighborhood aggregation: each layer computes H’ = σ(à H W) where à is the normalized adjacency matrix with self-loops, H is the feature matrix, and W is a learnable weight matrix. Despite its simplicity, GCN is remarkably effective.

The message-passing framework generalizes GNNs: each node collects "messages" from its neighbors, aggregates them (sum, mean, max), and updates its own representation. Different architectures vary the message function, aggregation, and update rule. This spatial view is more flexible than spectral methods and naturally handles heterogeneous graphs and edge features.

GAT replaces fixed aggregation weights (as in GCN) with learned attention coefficients. Each node attends to its neighbors with different weights, allowing the model to focus on the most relevant connections. Multi-head attention, borrowed from Transformers, provides stability and expressiveness. GAT often outperforms GCN on heterogeneous graphs where neighbors vary in relevance.

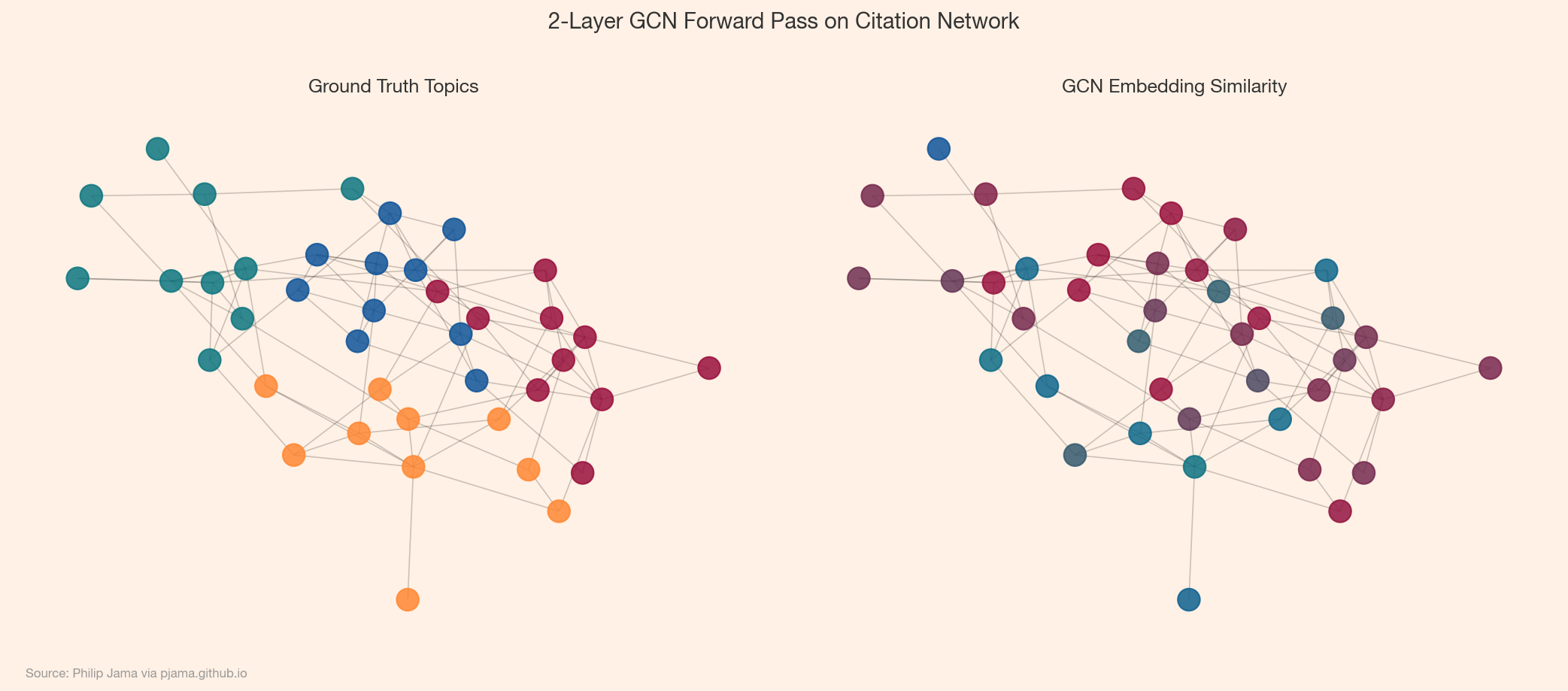

The canonical GNN task: given a graph where some nodes have known labels, predict the labels of the rest. The GCN or GAT learns embeddings that place same-class nodes nearby in representation space, then a final softmax layer classifies each node. The loss function -- cross-entropy -- is computed only on the labeled nodes, but message passing means every node's representation is shaped by its neighbors. Unlabeled nodes absorb information from labeled ones through the graph structure, so even a small labeled fraction can produce strong predictions across the entire graph.

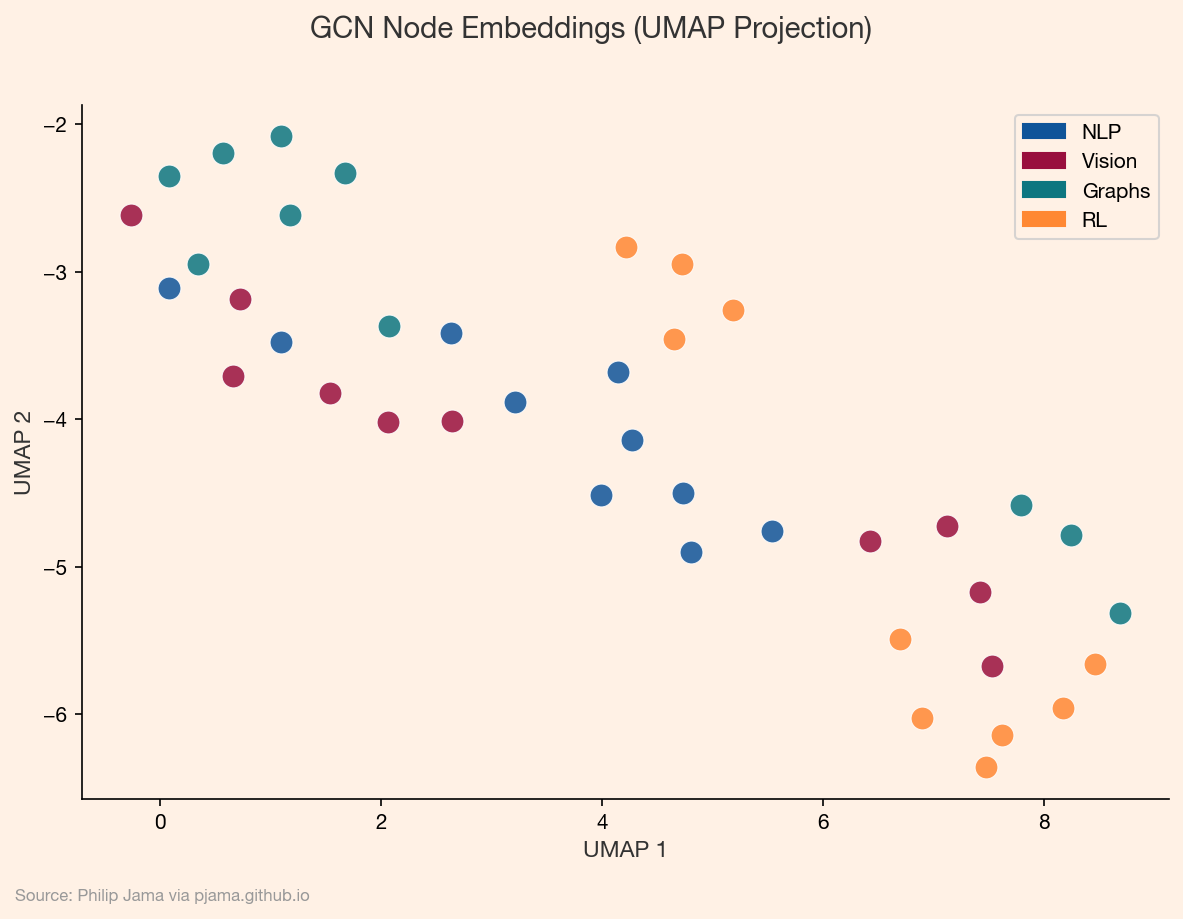

To see this in action, we can project the learned node embeddings into two dimensions using UMAP. Nodes that the GCN places close together in its high-dimensional embedding space should cluster by topic in the projection.

The models above treat edges as binary: connected or not. Real graphs carry richer relationships. A knowledge graph edge might encode "authored" vs. "cited"; a molecular graph edge might distinguish single from double bonds. Relational GNNs extend message passing to incorporate edge types and features.

The architecture change is straightforward in principle. Instead of a single weight matrix W shared across all neighbors, a relational GCN (R-GCN) maintains a separate W_r for each relation type r. During aggregation, each neighbor's message is transformed by the weight matrix corresponding to its edge type. For a node with neighbors connected by "coauthor," "advisor," and "cites" edges, each relationship contributes a differently-parameterized message. The cost is parameter growth: with many relation types, the number of weight matrices can explode. Basis decomposition -- expressing each W_r as a linear combination of a small set of basis matrices -- keeps this tractable.

Edge embeddings offer a more flexible alternative. Instead of assigning each edge a discrete type, the model encodes all available edge metadata -- relationship label, weight, timestamps, any numerical or categorical attributes -- into a single dense vector per edge, the same way node features get encoded into node embeddings. This vector is learned jointly with the rest of the network, so the model discovers which edge properties matter for the downstream task.

Concretely, the message function changes. In a standard Graph Convolutional Network, the message from node j to node i is just W * h_j -- the neighbor's embedding transformed by a shared weight matrix. With edge embeddings, the message becomes a function of both the neighbor embedding h_j and the edge embedding e_ij: for example, W * concat(h_j, e_ij), or W_node * h_j + W_edge * e_ij. The edge vector modulates the message, so the same neighbor can send different information depending on the relationship.

GAT adapts to this with a small architectural change. Recall that standard GAT computes an attention score for each neighbor based on the node embeddings alone: attention(h_i, h_j). With edge features, the attention function becomes attention(h_i, h_j, e_ij) -- the edge embedding is concatenated alongside the node embeddings before the attention weights are computed. This means the model can learn that an edge representing a strong tie deserves higher attention weight than a weak tie, without that distinction being hard-coded into the aggregation rule.

GNN training diverges from standard supervised learning in ways that matter for real applications. The semi-supervised setting -- training on a labeled subset while the loss propagates through the full graph -- means that the ratio of labeled to unlabeled nodes, and which nodes are labeled, can dramatically affect performance. Labeling a well-connected hub propagates more information than labeling a peripheral node.

A deeper question is whether the graph itself should be treated as fixed. Standard GCN and GAT assume static edge weights: the adjacency matrix is a given, and only the node representations evolve during training. This works when the graph structure is reliable -- a citation network, a molecular bond structure. But many real graphs have noisy or uncertain edges, and treating them as ground truth bakes that noise into every message-passing step.

Temporal graphs push this further. When edges carry timestamps -- transactions, messages, meetings -- the question is not just who is connected but when that connection was active. A collaboration from last month should carry more weight than one from three years ago. Static GNNs flatten this temporal dimension entirely, treating a graph snapshot as if all edges are equally current. Architectures that incorporate temporal significance -- weighting messages by recency, learning decay functions, or maintaining per-node memory states -- capture dynamics that static models miss. Part 8 (Temporal Graph Networks) explores these architectures in detail.

GNN architectures here assume a static snapshot. Real networks evolve -- temporal graph networks extend them to graphs where nodes and edges change over time.

If you're exploring related work and need hands-on help, I'm open to consulting and advisory. Get in touch›